AI means a lot of things to a lot of people. Usually what it means is not very well thought out. It is felt, it is intuited. It is either adored, worshipped or deemed blasphemous, profane, to be feared.

In this article, I explore what society at large really means by artificial intelligence as opposed to what researchers or computer scientists mean. I want to clarify for the non-technical audience what can realistically be expected from AI, and more importantly, what is just unrealistic pie-in-the-sky speculation.

I am worried that blind fear, or in some cases worship, of AI is being used to manipulate society. Politicians, business people, and media personalities craft narratives around AI that stir up deep emotions, which they then use to their advantage. Meanwhile the truth is only to be found in dense technical literature that is out of reach for the ordinary person.

What do we mean by intelligence?

Is intelligence a purely human characteristic? Most people will consider some dogs to be more intelligent than others, or dogs to be more intelligent than guinea pigs, so clearly intelligence is something that an animal can have.

If a dog can have intelligence, can a bird? How about an earthworm, or a plant? Where do we draw the line? There are many definitions of intelligence, but one that I like (from Wikipedia) is:

“the ability to perceive or infer information, and to retain it as knowledge to be applied towards adaptive behaviors within an environment or context.”

Seems reasonable? Some people argue that by this definition even plants can be intelligent. So why not computers?

This is the intelligence to which AI researchers generally refer. Yet the reality is that when average people speak of or think about AI, they are not thinking about plants or animals. Most people would not get too excited one way or another about the idea that a computer might be able to operate at the level of a plant, a guinea pig, or even a dog.

Equally, people do not really care if a machine can do what its creator intends it to do, what it is programmed to do. Handguns are created to kill people, and they do, yet no one worries that handguns will develop consciousness and kill all the humans, as they are “programmed” to do.

If we are going to be honest, we must admit that what the average person means by “artificial intelligence” is really “artificially like a human”, and what they worry about (or celebrate) is the possibility that AIs could spontaneously come up with motivations of their own.

The human factor

Motivation is key; human motivation has a different quality than that of all other animals, and this difference is arguably what makes humans unique.

The great mathematician and computer pioneer Alan Turing suggested that instead of asking what it is to be human, which he considered a practical impossibility, we could in effect simply say: “if it walks like a duck and quacks like a duck, then it is a duck.”

Turing’s proposal was that if a computer program could pass a written interview without the interviewer realizing that they were interacting with a computer, then the computer could be said to be, for all practical purposes, an artificial intelligence that was human in character. However, this does not really answer the question of whether a computer program could make the same kind of complex decisions in real time that a human does, or whether it could have motivations that were not preprogrammed into it.

Above all, a written interview encompasses only a very small part of human experience. We live in a world of action, and many actions have very real and immediate consequences. The decision of whether or not to walk down a dark alley is a complex one, and many of the factors that influence our decision are unknown. Every day, we make decisions without knowing all the data, and the fact that the human race survives and thrives is indisputable proof that on the whole we are amazingly successful at doing this, if one defines success as survival.

Value making machines

How we pull this trick off is a matter of debate. But most serious scholars would agree that the human propensity for assigning value is at the heart of it.

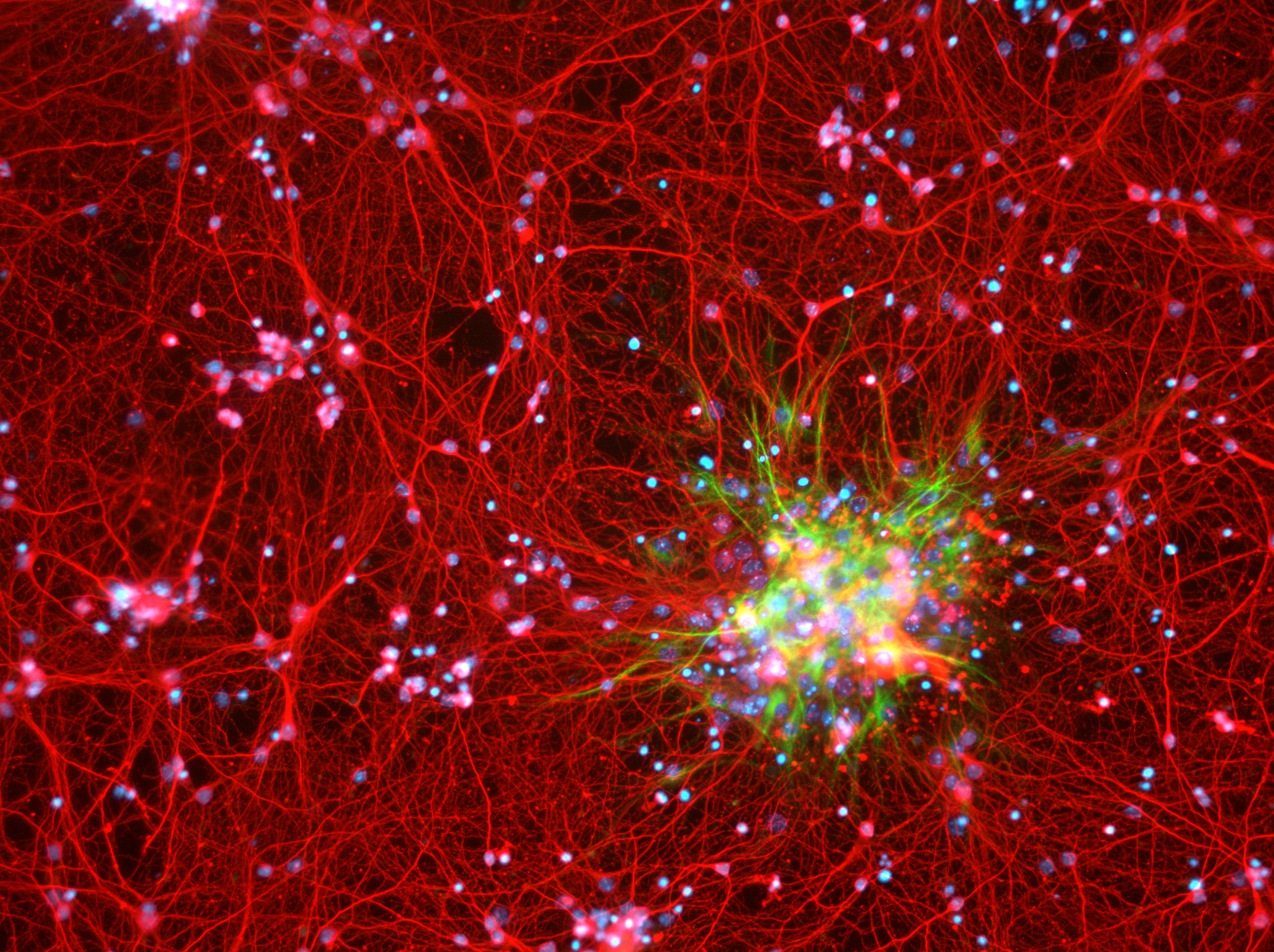

Humans are “value making machines”, and we assign value at a speed that no computer network could hope to match. If you hear an unexpected sound, you will have a reflex reaction in about 170 milliseconds. To put this in perspective: that is 30 milliseconds faster than the 200 milliseconds Google recommends for the first byte of data to reach your browser after you click on a link, and Google recommends a further 500 milliseconds for the page to finish loading after the first byte.

Loading a web page is a trivial action for a computer, and still it takes more than twice as long for the computer to do this as it does for your brain to figure out whether an unexpected noise is threatening (a snapping twig or a metallic sound) or delightful (ice cream truck bells or a child’s laughter), which, by the way, it does instantly and with virtually no data at all.

Identifying something as threatening or delightful is what we mean by assigning value, and it is something that we do instinctively, automatically, “without thinking”. Yet without doing it, we would be unable to “think” as we know it.

Say you are thirsty; you must solve the problem of what to drink, then you must solve the problem of getting it.

Suppose your choice of what to drink is between pond water and fresh sparkling well-water. You are thinking right now that obviously you want the well-water. But what if you are an escaped prisoner, and the pond water is safely out of sight in the forest, and the well is in a town square where you might be seen? Now you are thinking pond water. You are motivated by thirst, but you are more highly motivated to remain free. This is because the value you place on staying free is much higher than the value you place on the freshness of your water.

Human motivation is completely dependent upon values. A thirsty dog will simply drink from the first available water it finds, because its motivation is survival, unaffected by values.

Let’s consider an example unrelated to survival: would you push a button that would definitely kill one person, or would you refuse to push it even if it meant that 10 people might die? Your thinking about this question is not in any way related to your own physical survival, yet it has a moral urgency that few people could deny. Your thinking will be entirely directed by the values that you assign while evaluating your options.

Where do these values come from?

The truth is that we don’t know.

For some it is a question of faith. Sincerely religious people believe that our values are a reflection of God’s will. The few people who truly believe in evolutionary theory and all of its implications would say that our values are those which allow us to survive and which consequently perpetuate themselves.

Almost everyone else holds the view that values are self-evident truths: that we have these values because we all have them, that it is just obviously so. As Sam Harris puts it:

“When we really believe that something is factually true or morally good, we also believe that another person, similarly placed, should share our conviction”.

As far as a claim to understanding goes, this is weak tea. It provides no useful grounding for explaining why some values are culturally dependent, while others seem to be universal or nearly so. It does not explain the origin of values, and so for the most part, the religious and the evolutionists notwithstanding, we have no useful explanation of how values work.

And it is in values that we find the consciousness gap. In the above example you are motivated by thirst, a biological factor. An AI, equipped with the appropriate sensors, could also have that sort of motivation, for example the need to charge a battery that is running low.

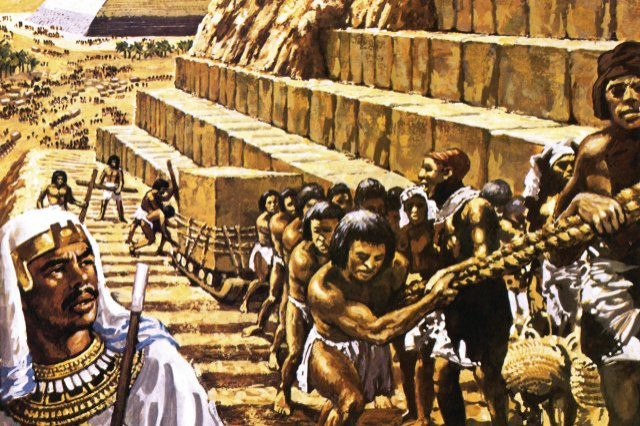

But what motivates you to take a picture of something beautiful to share with your loved ones, or to argue politics with your friends? What motivates you to watch a scary movie or to learn a sport? What about the button-pushing example? On what basis could a computer make a decision like that without a human first providing values, such as that one life is worth less than ten, or that killing is wrong no matter what the circumstances? Our morality, our ability to assign value in the blink of an eye, has evolved over millions of years. How would a computer (in a single generation, mind you, because computers have no natural reproductive mechanism) arrive at a moral grounding on its own, without a blueprint first being provided by a human?

Would we even want it to? Human history suggests that tens of thousands of generations were required before we arrived at what we now consider civilized behaviour. Would we really want a race of AIs to make the same slow and painful march towards civilization?

Sure, we could give them a “jump-start”, but then we are back to the consciousness gap: if we program in the jump-start, basically programming in the values of some human, can the AI be said to truly have human-like consciousness, capable of spontaneous motivations? As you may have guessed, I believe the answer is probably not.

Wherein the danger

This is not to say that AI poses no danger and gives no cause for concern. The use of AI is no more and no less subject to the law of unintended consequences than any other field of human endeavour. We can be absolutely certain that the use of AI for things such as curating the content of your social media feed will lead directly to unforeseen results that many people do not like.

This is not due to any inherent quality of AI. It is the nature of the world in which we live. It is inherent to human decision making, and whether that decision is to import cane toads into Australia (to control pests), or to use AI to make automated stock purchases, the overwhelming probability is that catastrophe will ensue.

Party tricks

We cannot imagine trying to present the “button problem” to a dog. We have no reason to believe that a dog would have any moral framework within which to make the problem relevant, no reason to believe that a dog would care one way or another.

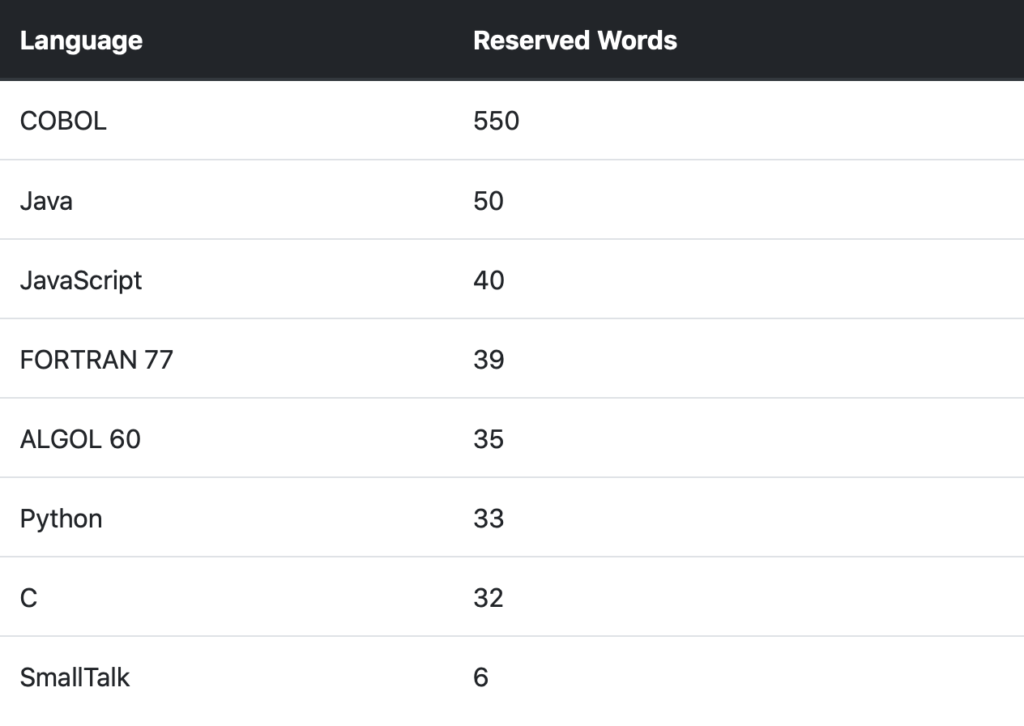

We have no more reason to believe that we could present this problem to an AI than to a dog. We have no evidence at all that any AI anywhere has a moral framework guided or informed by the sort of spontaneous value-making that marks humans. It is irrelevant that computers can calculate faster than a human or can solve certain classes of problems faster or better. Winning a chess game against a human is a landmark in programming and computer science, but it is of little consequence in the real world. The ability to calculate a very large number of permutations within a very strict, and very limited, set of rules is in no way indicative of general intelligence or of the ability of a computer to develop consciousness.

Computers being able to triage and diagnose medical conditions as well as — or possibly better than — human doctors is also not quite as impressive as it sounds. To be sure it is very helpful to automate checklists and decision trees; in a medical emergency a computer is faster than cracking a book. And it is true that without these checklists, humans are prone to all sorts of perceptual and cognitive biases, but you would be very wrong if you assumed that the AI that can do triage can also decide if the shadow in front of your car is a cardboard box or a child on a tricycle.

Sensationalism sells. The sky is always falling, and the failure of last week’s prophecies of Armageddon (the “Y2K bug” or New York City’s West Side Highway under water by 2019) never seems to slake people’s thirst for this week’s prediction of impending doom. The same can be said for sensationalist idealism. The repeated failure of utopian ideologies to actually produce the predicted earthly paradise seems in no way to hinder the conviction of the true believers that this time will be different.

AI is nowhere near as exciting, or mysterious, or dangerous, or magnificent, as its promoters and detractors would have you think. It has become for the most part a branch of the mathematics of probability, glorified actuarial work. It has all the sex appeal of an otaku or anorak lecturing on their favorite subject. It is a one-trick pony.

In short: it is nothing to worry about or get excited about. We return you to our regularly scheduled program.